By Oluwaseun Taiwo

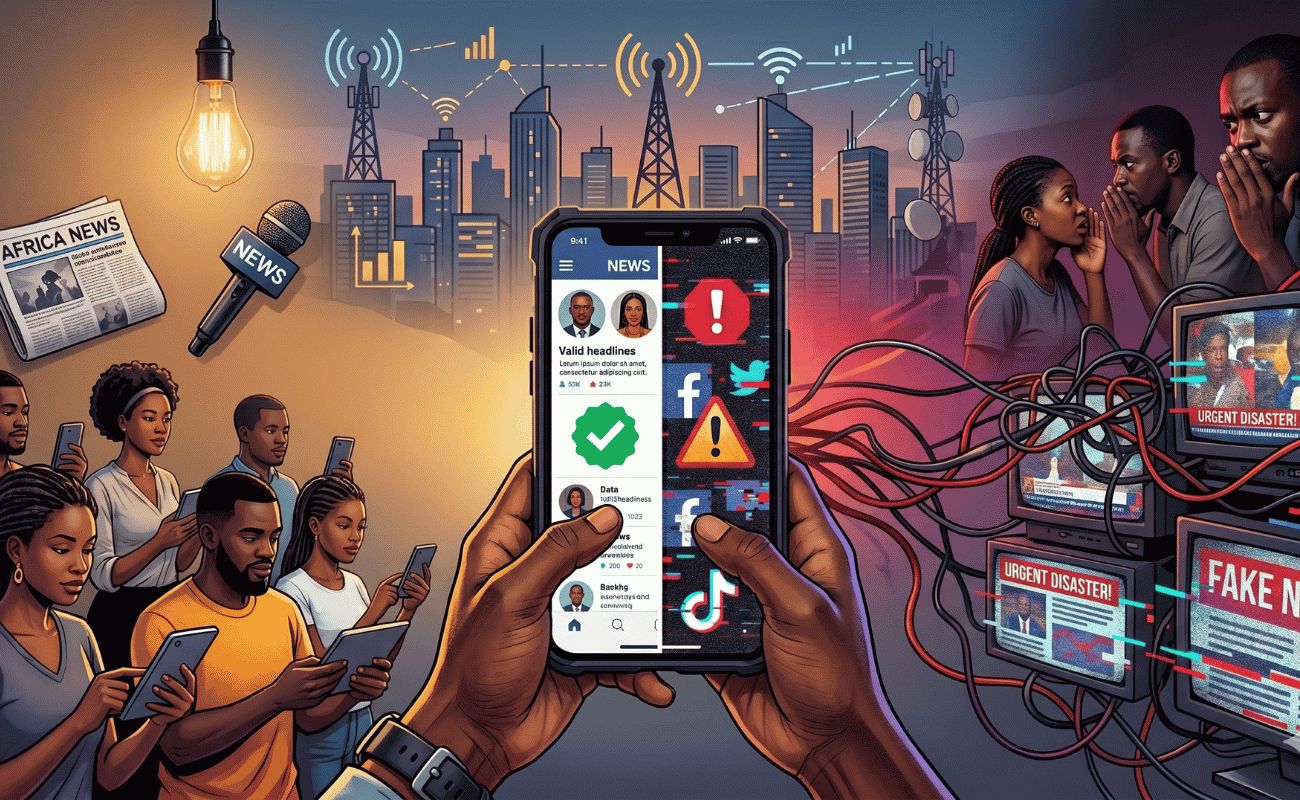

Scroll. Like. Share. Repeat. That is the rhythm of today’s information ecosystem, where a single post can travel across borders in seconds, shape opinions, and influence political outcomes. Social media has transformed how people engage with news, governance, and democracy across Africa, opening spaces that were once controlled by traditional media. Yet, in doing so, it has also blurred a crucial line: the distinction between information, misinformation, and disinformation. Understanding these differences is essential because they sit at the heart of a growing crisis of trust that increasingly affects electoral processes and democratic systems across the continent.

At its simplest, information refers to content that is accurate, verified, and grounded in fact. It is the kind of content that credible journalism seeks to produce and that citizens depend on to make informed decisions. When an electoral commission releases official election results, or when a reputable media house reports verified voter turnout figures, that is information. It strengthens public confidence, supports participation, and reinforces democratic accountability. However, in today’s digital environment, information no longer exists in isolation; it competes constantly with distorted and manipulated versions of reality.

Misinformation emerges when false or inaccurate content is shared without the intention of deceiving. It often spreads because individuals trust the source or fail to verify what they see. For instance, during an election period, a message might circulate on WhatsApp claiming that voting has been postponed in a particular area. A well-meaning citizen, believing the message to be true, forwards it to others. Although there is no deliberate intent to cause harm, the consequences can be significant: voters may stay home, confusion may spread, and participation may be undermined. Misinformation, therefore, disrupts democratic processes quietly but effectively, driven not by malice but by speed and trust in personal networks.

Disinformation, by contrast, is intentional and strategic. It involves the deliberate creation and dissemination of false information with the aim of misleading people, shaping perceptions, or influencing outcomes. During elections, this can take many forms: fabricated results shared before official announcements, doctored videos portraying candidates in a false light, or coordinated narratives designed to inflame ethnic or political tensions. Unlike misinformation, disinformation is calculated. It is often backed by organised efforts that exploit social divisions and target vulnerable audiences. Its goal is not just to confuse, but to manipulate.

From a critical standpoint, the problem is not merely that false information exists (it has always existed) but that the architecture of social media actively rewards its spread. Platforms are designed to maximise attention, and in doing so, they elevate content that provokes strong reactions. Accuracy becomes secondary to engagement. This raises an uncomfortable question:

Are social media platforms neutral spaces for communication, or are they active participants in shaping political realities?

In my view, they are no longer passive tools. Their algorithms, design choices, and moderation policies play a direct role in determining what people see, believe, and ultimately act upon.

Across Africa, electoral periods illustrate this challenge vividly. In countries like Nigeria, election cycles have been accompanied by waves of misleading and false content, including unverified claims. Even when corrections are issued, they rarely travel as far or as fast as the original falsehoods. This imbalance reflects a deeper structural issue: truth requires verification, and verification takes time, while falsehood thrives on immediacy.

This dynamic contributes to a broader erosion of trust. Democracy depends fundamentally on trust in institutions, trust in electoral processes, and trust in the information that informs citizens’ choices. When misinformation and disinformation dominate the information space, that trust begins to weaken. Citizens may start to question the legitimacy of elections, doubt the credibility of the media, or lose confidence in public institutions altogether. In extreme cases, this distrust can lead to disengagement, protests, or even violence, threatening the stability of democratic systems.

It is also important to acknowledge that the crisis of trust is not solely the fault of social media or its users. Governments and political actors sometimes contribute to the problem by manipulating information, dismissing credible reporting, or failing to communicate transparently. When official channels are inconsistent or lack credibility, citizens are more likely to turn to informal networks for information, where misinformation and disinformation thrive. In this sense, the information disorder we see today is as much a governance issue as it is a technological one.

Journalism, in this context, occupies a critical yet increasingly contested space. Traditional media is no longer the sole gatekeeper of information; it now competes with influencers, partisan actors, and anonymous accounts that often prioritize speed or agenda over accuracy. While journalists continue to provide verified and reliable reporting, their reach is frequently overshadowed by viral content. Moreover, journalists themselves can become targets of disinformation campaigns aimed at discrediting their work. Despite these challenges, I would argue that journalism remains one of the strongest defenses against information disorder, but only if it adapts. It must not only report facts but also actively engage audiences, explain context, and rebuild trust in an environment saturated with doubt.

Understanding why misinformation and disinformation spread also requires a reflection on human behavior. People are more likely to believe and share content that aligns with their existing views or identities. Social media reinforces this tendency by creating echo chambers where users are primarily exposed to like-minded perspectives. Emotional appeal further amplifies this dynamic; content that evokes fear, anger, or urgency is more likely to be shared, regardless of its accuracy. Disinformation campaigns exploit these patterns, crafting messages that feel convincing even when they are entirely false. This suggests that the challenge is not only technological but deeply psychological.

In my view, addressing this issue demands more than technical fixes or regulatory interventions. While platforms must take greater responsibility for the content they amplify, and governments must avoid heavy-handed censorship, the long-term solution lies in building a culture of critical engagement. Citizens must move from being passive consumers of information to active evaluators of it. This requires investment in digital literacy, education, and public awareness, particularly in the context of elections, where the stakes are highest.

Ultimately, the integrity of Africa’s democratic processes depends not only on how votes are cast and counted, but also on the quality of information that surrounds them. Social media has made participation more accessible, but it has also made truth more contested. If trust continues to erode, the consequences will extend far beyond individual elections, shaping how citizens relate to governance, institutions, and one another.

The question, then, is not simply whether information is true or false, but whether societies can sustain a shared sense of reality in an age of endless content. Protecting that shared reality is no longer the responsibility of journalists or institutions alone. It is a collective task, one that requires accountability from platforms, transparency from governments, integrity from the media, and critical awareness from citizens. Only then can trust be rebuilt, and only then can democracy function as it should.